Sponsored Post: Why is my broadband so slow?

By Edwin Yapp December 13, 2013

MALAYSIA has made great progress in its quest to become a digital nation since it set its sights on doing so with the introduction of the first broadband copper-based service delivered on Asymmetric Digital Subscriber Line (ADSL) technology at the turn of the millennium.

However, it was not until the last five years that broadband truly took off, with the introduction of fibre-to-the-home (FTTH) services, which offered between 100 and 1,000 times more bandwidth than what ADSL was able to bring to consumers and companies.

This is because fibre imposes virtually no practical limit on how fast a broadband connection can go. Unlike copper-based ADSL systems, which depend on electrical signals to transmit their data, fibre is based on optics, or light transmission.

The key advantage of light transmission is that it suffers far less degradation and loss than ADSL, whose electrical signals are susceptible to interference and whose copper lines physically degrade over time. In general, aged ADSL lines have been known to degrade to the point where they can transmit at only about 10% to 20% of their maximum speeds.

Coupled with the age problem is the fact that the speed of copper-based lines is limited by distance. Typically, the transmission speeds of copper-based systems tend to taper off to no more than 50% of their maximum beyond five kilometres from the nearest exchange.

Just think of it this way: one fibre optic strand can carry data at speeds in the gigabit range, whereas copper-based systems are limited to the megabit range. Fibre optics is also largely free from the aforementioned disadvantages that plague copper-based systems.

With FTTH, broadband service providers are no longer constrained by the limitations of ADSL and are able to deliver services to companies and homes easily at 100 megabits per second (Mbps), a measure of capacity known as bandwidth.

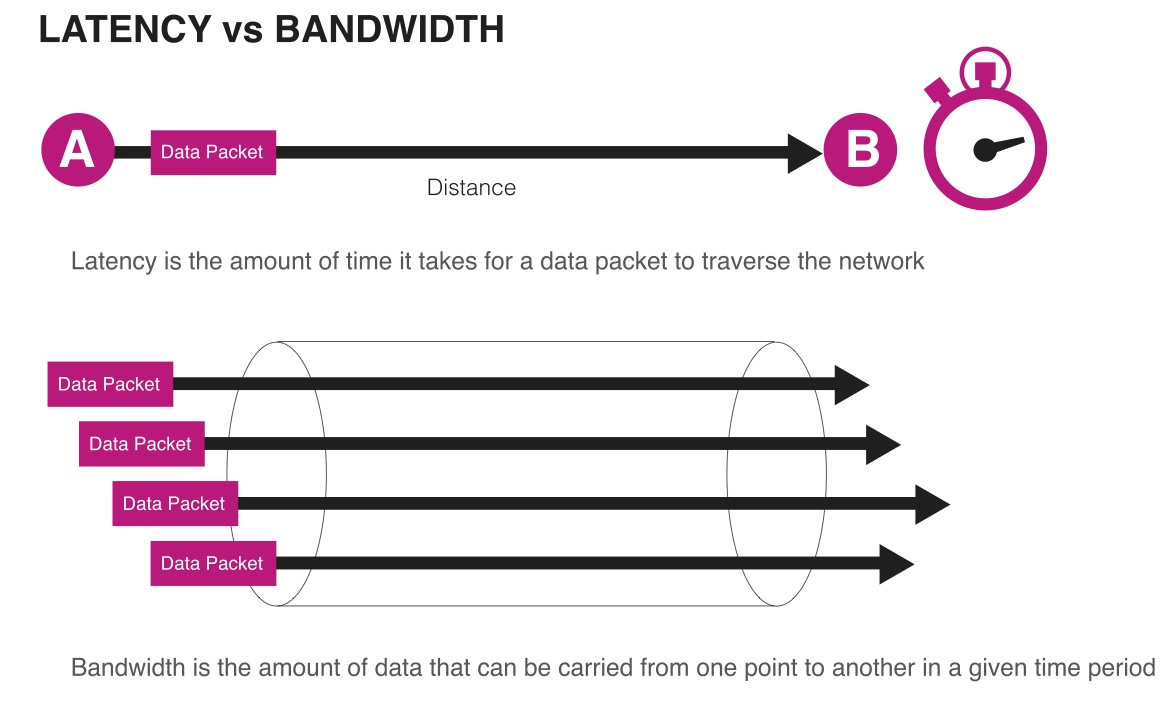

Simply put, bandwidth is the amount of data that can be transmitted over a fixed period of time. The bandwidth most users subscribe to typically ranges from 5Mbps to 20Mbps.
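To put those numbers in perspective, here is a rough sketch in Python of the best-case time needed to move a file at different bandwidths. The 100-megabyte file size and the function name are illustrative assumptions, and the calculation deliberately ignores latency, packet loss and protocol overhead, so real transfers will take longer.

```python
def transfer_seconds(size_megabytes: float, bandwidth_mbps: float) -> float:
    """Best-case transfer time, ignoring latency, loss and overhead."""
    size_megabits = size_megabytes * 8  # 1 byte = 8 bits
    return size_megabits / bandwidth_mbps


# Illustrative 100 MB file at three subscribed bandwidths.
for mbps in (5, 20, 100):
    seconds = transfer_seconds(100, mbps)
    print(f"{mbps:>3} Mbps: {seconds:.0f} s for a 100 MB file")
```

Note the factor of eight: bandwidth is quoted in megabits, while file sizes are usually quoted in megabytes.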

But despite all the advantages inherent in FTTH connectivity, consumers still routinely wonder why their connections seem to suffer slow speeds, or the websites they visit tend to take a long time to load.

There are several reasons for this. The first is attributable to wrong terminology. Unbeknownst to many users, speed and bandwidth are not synonymous, though many people use these terms interchangeably.

Bandwidth is a measure of capacity, while connection speed (or response time) is actually a combination of how much bandwidth a person subscribes to plus several other factors, including latency, packet loss, the type of application being run, the capacity of the web server, and the performance of the user's own equipment.

Thus, if a subscriber experiences slow response or speeds while surfing the Web, the reason isn’t simply the bandwidth supplied by the service provider; it could be due to other factors that are likely not in the control of the service provider.

Influencing factors

In general, transmission between a server on the Internet and a client device in a home or office is achieved using Internet Protocol. In this mode of transmission, data is 'packetised' into blocks which are sent to their destination by being routed over the Internet.

Latency is a measure of how long a packet takes to travel from the source to the destination. This is influenced by the distance and capacity between the source and destination, as well as by how many hops the data packets have to go through along their route over the Internet: the shorter the distance and the fewer the hops, the lower the latency.
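A simplified model shows why latency can matter more than bandwidth. The Python sketch below treats the response time of a web page as the raw transfer time plus the time spent waiting on round trips to the server. The page size, the number of round trips and the round-trip times are all made-up figures for illustration, and the model itself is an assumption rather than a measurement method.

```python
def response_seconds(size_megabytes: float, bandwidth_mbps: float,
                     rtt_ms: float, round_trips: int) -> float:
    """Rough response time: transfer time plus time waiting on round trips."""
    transfer = size_megabytes * 8 / bandwidth_mbps  # seconds moving the data
    waiting = round_trips * rtt_ms / 1000           # seconds waiting on the network
    return transfer + waiting


# Hypothetical 2 MB page that needs 30 round trips to load.
nearby = response_seconds(2, 20, rtt_ms=10, round_trips=30)
distant = response_seconds(2, 20, rtt_ms=200, round_trips=30)
distant_fast = response_seconds(2, 100, rtt_ms=200, round_trips=30)

print(f"Nearby server, 20 Mbps:   {nearby:.2f} s")
print(f"Distant server, 20 Mbps:  {distant:.2f} s")
print(f"Distant server, 100 Mbps: {distant_fast:.2f} s")
```

In this sketch, upgrading from 20Mbps to 100Mbps barely helps the distant server case, because most of the time is spent waiting rather than transferring.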

The second factor is packet loss. Every time a packet is sent out, the source waits for an acknowledgement from the destination to signal that it has arrived. If no acknowledgement comes back, the packet is treated as lost and must be sent again; this could be due to faulty devices in the network or because a firewall blocks these packets. Packet loss is a measure of how many packets fail to arrive at the destination.
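Packet loss is usually quoted as a percentage of packets sent. A minimal Python sketch, assuming we simply know how many packets were sent and how many were acknowledged (the function name and the sample counts are illustrative):

```python
def packet_loss_percent(sent: int, acknowledged: int) -> float:
    """Share of sent packets that were never acknowledged, as a percentage."""
    if sent <= 0:
        raise ValueError("at least one packet must have been sent")
    return 100 * (sent - acknowledged) / sent


# Hypothetical test run: 1,000 packets sent, 985 acknowledged.
print(f"Packet loss: {packet_loss_percent(1000, 985)}%")
```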

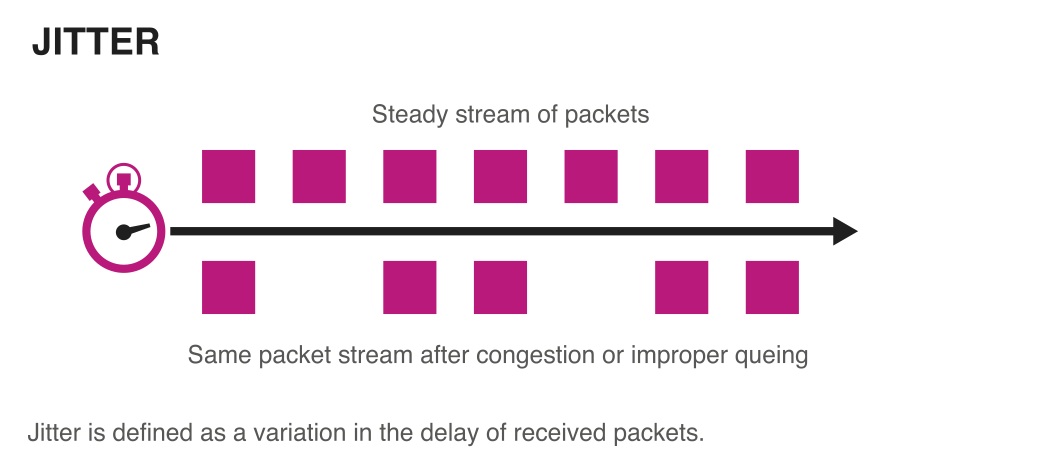

A third factor is jitter, which is the variation in the time data packets take between being sent and arriving at their destination. This phenomenon is caused by timing delays during IP transmission, and the net effect is that the data stream arrives unevenly at its destination.
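One simple way to put a number on jitter is to average how much the delay changes from one packet to the next. The Python sketch below does this for a handful of made-up timestamps; it is one common simplification, not the only definition in use, and the function name is illustrative.

```python
from statistics import mean


def mean_jitter_ms(send_ms: list[float], arrive_ms: list[float]) -> float:
    """Average change in delay between consecutive packets, in milliseconds."""
    delays = [arrive - send for send, arrive in zip(send_ms, arrive_ms)]
    changes = [abs(later - earlier) for earlier, later in zip(delays, delays[1:])]
    return mean(changes)  # raises StatisticsError if fewer than two packets


# Four packets sent 20 ms apart; arrival times are invented for illustration.
sent = [0, 20, 40, 60]
arrived = [50, 72, 88, 115]  # delays of 50, 52, 48 and 55 ms
print(f"Jitter: {mean_jitter_ms(sent, arrived):.2f} ms")
```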

As can be plainly seen, latency, packet loss and jitter significantly influence the response time for the connection between consumers and the websites they are trying to reach.

Beyond these network factors, application performance also plays a big part. This is where the front end and back end of a website are optimised so that pages are presented in the best way possible and users can reach the website much more efficiently.

Connected with this is the optimisation of the hardware components of the web servers, ensuring that they have enough memory, processor power and networking capacity to handle requests from users, especially during peak periods.

Finally, whilst it is not directly under the influence of the service provider, users should always ensure that the PC, laptop, tablet or smartphone they use to access websites is capable of doing so effectively.

Optimising data transfer

Network researchers have come up with various ways to deal with these challenges and the techniques used are generally known as WAN (wide area network) Optimisation. For example, to deal with latency, engineers use what is known as a caching engine.

A caching engine is a dedicated server that stores frequently requested data locally so that it does not have to be fetched again over the broader Internet, which helps manage bandwidth demands and lessens the amount of data sent and received over the Net.
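The idea behind a caching engine can be sketched in a few lines of Python. This is a toy illustration only: the class name, the `fake_origin` function standing in for a real web server, and the URL are all hypothetical, and a real caching engine would also have to expire stale content.

```python
class CachingEngine:
    """Toy cache: fetch each URL from the origin once, then serve it locally."""

    def __init__(self, fetch_from_origin):
        self._fetch = fetch_from_origin  # function that goes out to the Internet
        self._store = {}                 # locally saved copies, keyed by URL
        self.origin_requests = 0         # how often we had to leave the network

    def get(self, url: str) -> bytes:
        if url not in self._store:
            self.origin_requests += 1
            self._store[url] = self._fetch(url)
        return self._store[url]


def fake_origin(url: str) -> bytes:
    """Stand-in for a distant web server (hypothetical)."""
    return f"content of {url}".encode()


cache = CachingEngine(fake_origin)
for _ in range(3):
    cache.get("http://example.com/logo.png")
print(f"Requests that reached the origin: {cache.origin_requests}")
```

Three users asking for the same file result in a single trip over the Internet; the other two requests are answered from the local copy.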

To address packet losses, techniques such as deduplication and compression are used, since the less data that has to cross the network, the less there is to lose and resend. Deduplication transfers only the data that has actually changed, thereby avoiding sending duplicate copies of repeating data. Compression encodes data in fewer bits before it is sent out, so that the same content takes up less of the connection.
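Both ideas can be shown with Python's standard library. The sketch below is a simplified illustration: it compresses a payload with `zlib` and skips chunks of data that have already been sent by remembering their SHA-256 fingerprints. The function names and sample data are made up, and commercial WAN optimisation products work on a far larger scale and with more elaborate schemes.

```python
import hashlib
import zlib


def compress(payload: bytes) -> bytes:
    """Encode the payload in fewer bytes; zlib.decompress() restores it."""
    return zlib.compress(payload)


def deduplicate(chunks: list[bytes], already_sent: set[str]) -> list[bytes]:
    """Return only the chunks whose fingerprint has not been seen before."""
    to_send = []
    for chunk in chunks:
        fingerprint = hashlib.sha256(chunk).hexdigest()
        if fingerprint not in already_sent:
            already_sent.add(fingerprint)
            to_send.append(chunk)
    return to_send


# Repetitive data compresses well.
page = b"<li>broadband</li>" * 500
print(f"Original: {len(page)} bytes, compressed: {len(compress(page))} bytes")

# The repeated header chunk is sent only once.
seen: set[str] = set()
chunks = [b"header", b"monday report", b"header", b"tuesday report"]
print(f"Chunks sent: {len(deduplicate(chunks, seen))} of {len(chunks)}")
```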

Other techniques used include error correction, which helps recover lost packets; traffic shaping, which is the ability to control which kind of application traffic gets sent before another; and traffic priority, which determines which packet goes before another.
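Traffic priority in particular lends itself to a short illustration. In the Python sketch below, packets wait in a queue and the most urgent one always leaves first, with packets of equal priority leaving in arrival order. The class name, the priority numbers and the traffic labels are assumptions for the example; real network equipment uses more sophisticated scheduling.

```python
import heapq
from itertools import count


class PriorityLink:
    """Toy outgoing queue: lower priority number means sent sooner."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker keeps equal priorities in order

    def enqueue(self, priority: int, packet: str) -> None:
        heapq.heappush(self._heap, (priority, next(self._arrival), packet))

    def dequeue(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


link = PriorityLink()
link.enqueue(2, "email attachment")
link.enqueue(0, "voice call packet 1")
link.enqueue(1, "web page")
link.enqueue(0, "voice call packet 2")

while link:
    print(link.dequeue())
```

Here the two voice packets are sent first even though the email attachment was queued before them, because a delayed voice packet is far more noticeable to the user than a delayed email.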

At the end of the day, Internet users must realise that bandwidth is only one of the main components influencing the speed or response time of their Internet connections, and that there are many factors beyond the direct control of the service provider. Understanding this will help users appreciate the intricacies of bandwidth management and help manage customer expectations.
